Step-by-Step Guide to Implementing Agentic RAG in Your Enterprise

In the current business environment, adopting advanced technologies has become essential to maintaining a competitive edge. One such technology gaining attention in business circles is the use of RAG AI models for business automation. This approach speeds up enterprise automation by retrieving relevant information on demand, which helps enterprises make more informed decisions.

Retrieval-Augmented Generation (RAG) in enterprise is the next big thing enterprises must look out for. This blog post will walk you through a comprehensive step-by-step guide to implementing Agentic RAG in your organization.

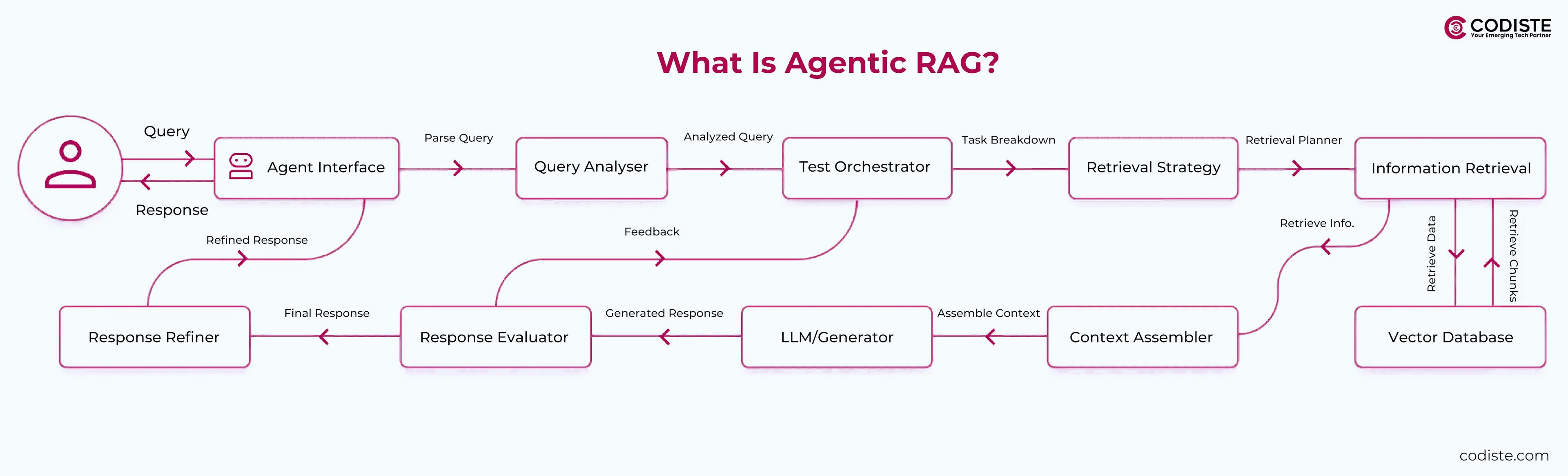

What is Agentic RAG?

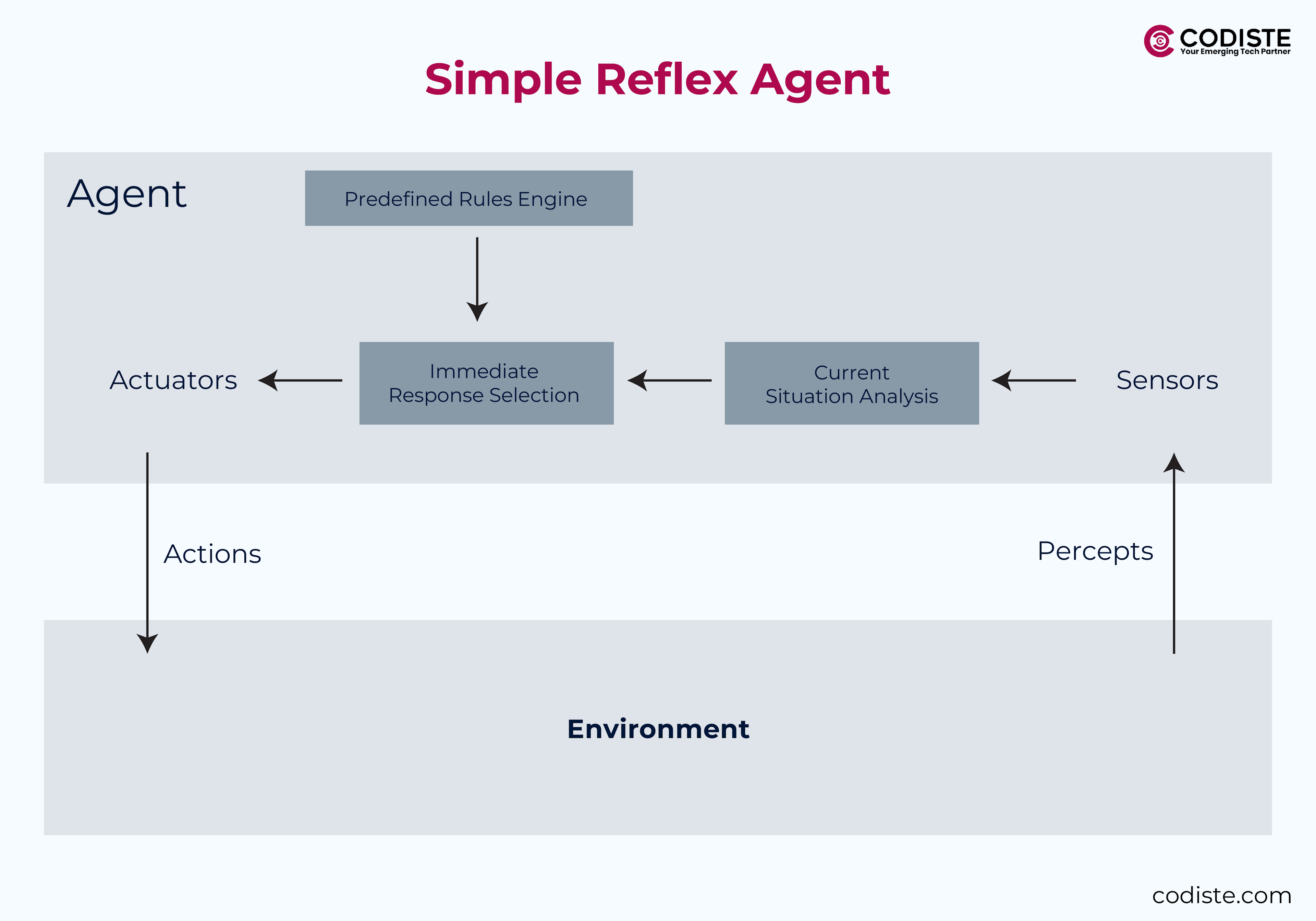

Before implementing RAG AI models, organizations must understand what Agentic RAG entails. Agentic RAG builds on traditional RAG frameworks by integrating intelligent agents that enhance information retrieval capabilities. These agents can process complex queries and provide more nuanced answers, making them invaluable in data-driven decision-making and automating business processes. The sections below walk through the process of implementing Agentic RAG in an enterprise.

Step 1: Define Objectives and Use Cases for Agentic RAG

Implementing RAG AI models in your enterprise begins with a crucial first step: defining clear business objectives and identifying the specific use cases where this technology can be of significant value. Let's take a closer look at how to approach this step effectively.

Identifying Key Tasks for Agentic RAG

Customer Support that Automates Complex Queries

One of the most impactful applications of Agentic RAG is in customer support. Enterprise AI automation with RAG helps businesses respond to complex customer queries by drawing on the agents' contextual understanding. This improves the customer experience by providing timely and accurate information and reduces the workload on human support staff, allowing them to focus on more intricate issues.

These intelligent agents can analyze customer interactions and retrieve relevant information from a vast database, ensuring that responses are precise and contextually appropriate.

Knowledge Management to Streamline Access to Internal Documents

Accessing internal documents and databases can be tedious. Agentic RAG streamlines this by giving employees rapid and efficient access to the information they need. Integrating it with knowledge management systems helps employees find relevant data, reports, and documents without sifting through enormous databases. This capability saves time, improves productivity, and ensures employees get the correct information when needed.

Decision-Making to Generate Insights from Real-Time Data

Another robust use case for business applications of Agentic RAG is in decision-making processes. By analyzing real-time data, such as financial reports and market trends, Agentic RAG generates insights that inform strategic decisions. This allows organizations to respond promptly to fluctuating market conditions and make data-driven decisions that significantly impact enterprise success.

Start with a Pilot Project

Businesses should run a pilot project before fully scaling up an Agentic RAG implementation. For instance, suppose you are developing an internal HR chatbot that uses Agentic RAG to assist employees with inquiries about standard policies, benefits, and procedures. This pilot can serve as a testing ground to refine the technology, gather feedback, and demonstrate the value of Agentic RAG within a controlled environment. Starting small mitigates risk and confirms that the system meets your organization's needs before a broader rollout.

When you clearly define these objectives and identify relevant use cases, you set a solid foundation for the successful implementation of Agentic RAG in your enterprise.

Step 2: Choose Components and Tools for Agentic RAG Implementation

Once the objectives are defined and potential use cases identified, the next crucial step is selecting the right tools and components that align with your business requirements. Below, we break down the key elements of an Agentic RAG system and recommended tools and frameworks for each.

1. Retrieval System

A robust retrieval system is essential for efficiently accessing and delivering relevant information. Here are some popular options:

- Elasticsearch

A powerful search engine widely used for full-text search and analytics. Its ability to handle large volumes of data and provide real-time search capabilities makes it an excellent choice among many.

- Pinecone

This is a vector database specifically designed for machine learning applications. It provides a seamless way to store and query embeddings, ensuring that your retrieval system can quickly find the most relevant information based on user queries.

- FAISS (Facebook AI Similarity Search)

Designed for efficient similarity search and clustering of dense vectors, FAISS is particularly useful when working with high-dimensional data. This makes it ideal for applications that require fast information retrieval based on semantic similarity.

2. Generative Model

The generative model is the heart of an Agentic RAG system, enabling it to generate insightful responses based on retrieved data. Consider the following options:

- GPT-4

As one of the most advanced language models, GPT-4 offers high-quality text generation capabilities, making it a powerful tool for creating human-like responses in various applications.

- Gemini

This model is designed to generate contextually relevant content, which can enhance the performance of your RAG system by ensuring that generated responses are accurate and contextually appropriate.

- Llama 3

An open-source alternative that provides flexibility and customization options for enterprises looking to tailor their generative models to specific applications.

- Open-Source Alternatives

Consider models like Mistral for organizations that prefer open-source approaches. These models can be fine-tuned for specific tasks and integrated into your Agentic RAG system. Note that BERT, often mentioned alongside them, is an encoder model better suited to supporting tasks such as classification and embedding than to generating responses.

3. Agent Orchestration

Agent orchestration is critical for managing interactions between various components of your RAG system. Here are some tools that can help:

- LangChain

This foundational framework facilitates the development of applications that leverage language models. It provides utilities for chaining together different components of your RAG system.

- CrewAI

Built for multi-agent collaboration, this tool allows different intelligent agents to work together in a coordinated manner. This is particularly useful for complex queries that require input from multiple sources.

- RAGapp

If you prefer a no-code solution, RAGapp offers a user-friendly interface built on Docker, enabling users to deploy RAG applications without extensive programming knowledge.

4. Knowledge Base

A well-organized knowledge base is critical for information storage and retrieval. Depending on what your organization needs, consider the following options:

- Confluence

If the goal is to create, share, and manage documentation, this collaboration tool is an excellent choice. Its integration capabilities make it easier to link with other tools in your RAG system.

- SharePoint

As a robust document management and storage system, SharePoint allows organizations to create custom workflows and manage documents efficiently.

- Custom Document Repositories

Depending on your specific requirements, you might also opt for a custom-built repository tailored to your organizational structure and data needs.

Carefully selecting the right components and tools for your Agentic RAG implementation will ensure your system is equipped to meet your enterprise's unique challenges and objectives.

Step 3: Data Preparation for Agentic RAG Implementation

Data preparation is essential in implementing RAG AI models. The quality of the data you use directly impacts your model's performance. This section explores the crucial data preparation components, including data gathering, cleaning, preprocessing, and indexing for efficient retrieval.

1. Data Gathering

The first step in data preparation is aggregating relevant data from various sources. This might include:

- Internal Documents

Collecting reports, manuals, and other vital documents that contain valuable information. These resources provide a wealth of knowledge that your RAG system can leverage.

- FAQs

A goldmine for understanding common queries and concerns. By compiling these, you can ensure that your system can handle typical customer inquiries effectively.

- CRM Data

CRM contains insights into customer interactions, preferences, and behaviors. This data helps customize responses and improve the relevance of generated content.

- API Endpoints

If your organization has existing APIs that provide access to data, integrating these endpoints can enrich your RAG system with real-time information, enhancing its responsiveness and accuracy.

2. Data Cleaning & Preprocessing

The next step after data gathering is to clean and preprocess it to ensure quality and relevance. This involves several key actions:

- Remove Duplicates and Irrelevant Content

Start by identifying and eliminating duplicate entries that can skew results. Additionally, filter out any irrelevant content that does not contribute to the objectives of your RAG system. This step is crucial for maintaining a clean dataset that enhances model performance.

- Using NLP Libraries for Tokenization and Entity Recognition

NLP libraries, such as spaCy, are invaluable for preprocessing tasks. Tokenization breaks down text into individual words or phrases, making it easier to analyze. Entity recognition identifies and categorizes key elements within the text, such as names, dates, and locations, which can be critical for generating contextually relevant responses.
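As a rough illustration of the cleaning step, the sketch below removes empty and duplicate documents and splits text into tokens using only the Python standard library. The function names and the normalization rules are assumptions made for this example; a production pipeline would more likely rely on an NLP library such as spaCy for tokenization and entity recognition.

```python
import hashlib
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivially different copies compare equal."""
    return re.sub(r"\s+", " ", text).strip().lower()


def deduplicate(documents: list[str]) -> list[str]:
    """Keep the first occurrence of each document, comparing normalized content."""
    seen: set[str] = set()
    unique: list[str] = []
    for doc in documents:
        key = normalize(doc)
        if not key:
            continue  # drop empty documents
        digest = hashlib.sha256(key.encode("utf-8")).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique.append(doc)
    return unique


def tokenize(text: str) -> list[str]:
    """Very simple tokenizer (an assumption): lowercase runs of letters and digits."""
    return re.findall(r"[a-z0-9]+", text.lower())
```

Hashing the normalized text keeps memory use small when the document set is large, at the cost of only catching exact duplicates after normalization; near-duplicates would need a similarity-based approach.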

3. Data Indexing

After cleaning and preprocessing your data, the final step is to index data for efficient retrieval. This involves converting your text into embeddings, numerical representations of the data that capture its semantic meaning. Here's how to do it:

- Convert Text into Embeddings

Use models like OpenAI’s text-embedding-3-small to transform your cleaned text into embeddings. These embeddings allow your RAG system to understand the relationships between different pieces of information, facilitating more accurate retrieval based on user queries.

- Store in a Vector Database

Once the text is converted into embeddings, store these representations in a vector database. This setup enables rapid searching and retrieval of relevant information based on similarity, ensuring that your RAG system can respond quickly and accurately to user inquiries.
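To make the indexing idea concrete, here is a minimal sketch of "embed, store, search by similarity". It is not how a real deployment would work: `toy_embed` is a hypothetical stand-in that hashes words into a small vector, where you would normally call an embedding model, and `InMemoryVectorStore` is a hypothetical stand-in for a vector database such as Pinecone or FAISS.

```python
import hashlib
import math
import re

DIM = 256  # toy dimensionality chosen for this sketch


def toy_embed(text: str) -> list[float]:
    """Stand-in embedding: hash each token into a bucket, then L2-normalize."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.md5(token.encode("utf-8")).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


class InMemoryVectorStore:
    """Stores (text, vector) pairs and ranks them by cosine similarity."""

    def __init__(self) -> None:
        self._items: list[tuple[str, list[float]]] = []

    def add(self, text: str) -> None:
        self._items.append((text, toy_embed(text)))

    def search(self, query: str, k: int = 3) -> list[tuple[float, str]]:
        q = toy_embed(query)
        # Vectors are unit length, so the dot product equals cosine similarity.
        scored = [(sum(a * b for a, b in zip(q, vec)), text) for text, vec in self._items]
        return sorted(scored, key=lambda pair: pair[0], reverse=True)[:k]
```

The linear scan in `search` is fine for a few thousand documents; dedicated vector databases exist precisely because that scan becomes too slow at larger scale.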

All these steps lay a solid foundation for agentic RAG implementation. A meticulous approach to data preparation is essential for maximizing the potential of your generative AI capabilities.

Step 4: Build the Agentic RAG Pipeline

Building an effective agentic RAG pipeline involves two main phases: retrieval enhancement and agent integration. Each phase ensures your system can efficiently fetch, process, and generate relevant information. Let's delve into the components of each phase.

Phase 1 – Retrieval Enhancement

Dynamic Retrieval

To maximize the effectiveness of your RAG system, configure agents to fetch context from multiple sources dynamically. This can include databases, APIs, and other data repositories. Tools like LlamaIndex provide a flexible framework for building knowledge assistants that connect large language models (LLMs) to your enterprise data. By leveraging these tools, your agents can access a broader range of information, enhancing the context for generating responses.

Hybrid Search

Implementing a hybrid search strategy is essential for improving accuracy in information retrieval. This approach combines traditional keyword-based search methods like BM25 with semantic search techniques. By integrating both methods, your system can better understand user queries and retrieve the most relevant information, whether it's based on exact matches or contextual relevance. This dual approach ensures that users receive comprehensive and accurate results.
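One common way to merge a keyword ranking and a semantic ranking is reciprocal rank fusion (RRF), where each document scores the sum of 1 / (k + rank) over the lists it appears in. The sketch below assumes you already have two ranked lists of document IDs, for example one from BM25 and one from a vector search; the IDs are made up, and RRF is only one of several possible fusion strategies.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of document IDs into one ranking.

    k dampens the influence of top ranks; 60 is a conventional default.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by descending score, breaking ties by ID for deterministic output.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


keyword_hits = ["doc_a", "doc_b", "doc_c"]   # e.g. from a BM25 search
semantic_hits = ["doc_b", "doc_d", "doc_a"]  # e.g. from a vector search
fused = reciprocal_rank_fusion([keyword_hits, semantic_hits])
```

Because RRF works on ranks rather than raw scores, it avoids having to put BM25 scores and cosine similarities on a common scale.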

Phase 2 – Agent Integration

Single-Agent Systems

You can utilize single-agent systems for more straightforward tasks, such as answering frequently asked questions (FAQs). An excellent example is LangChain's ConversationalRetrievalChain, which allows you to create a straightforward conversational interface. This system can efficiently handle user queries by retrieving relevant information and generating responses based on the context provided.

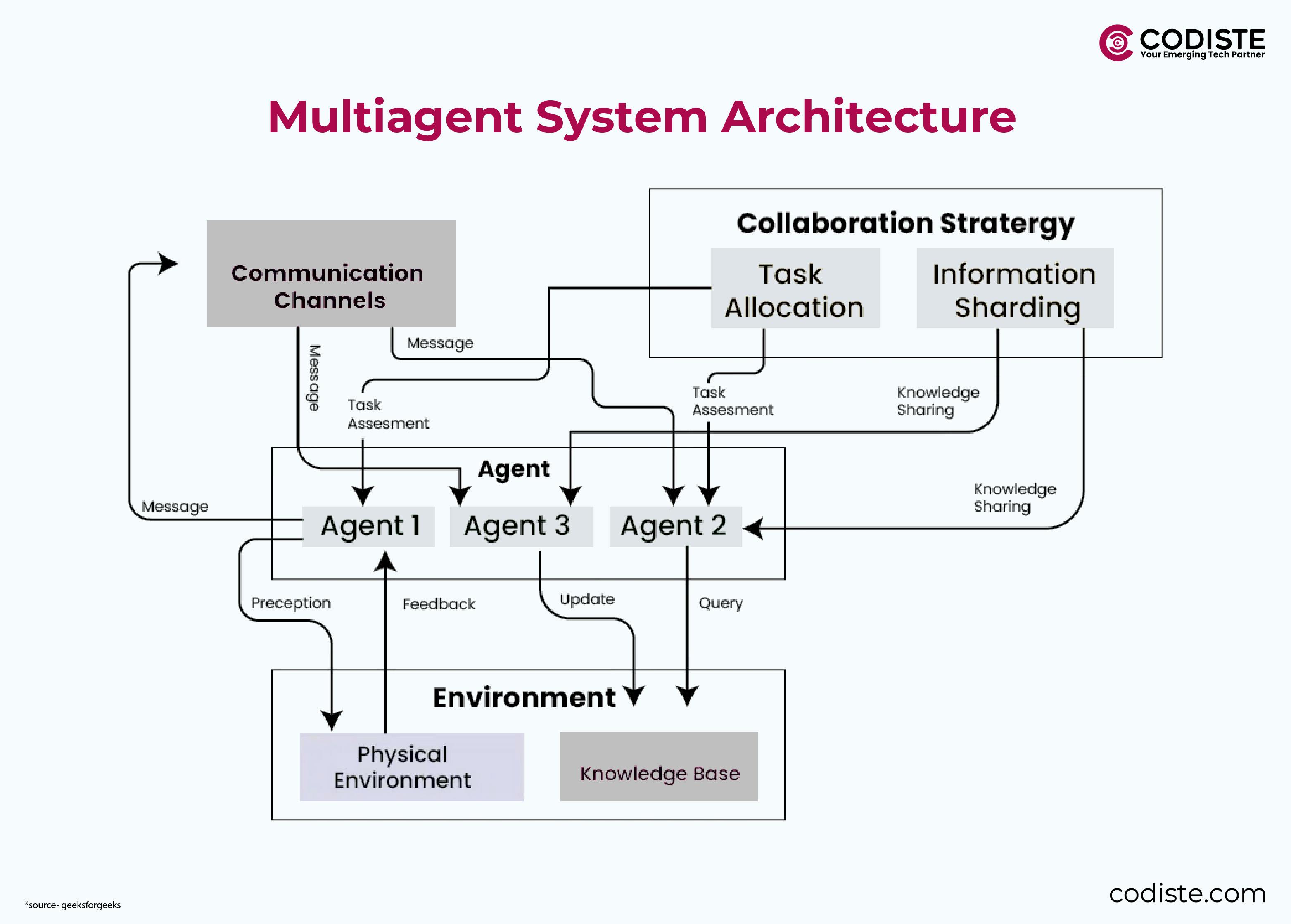

Multi-Agent Systems

Deploying multi-agent systems is beneficial for more complex workflows, such as customer onboarding processes. Tools like CrewAI enable the creation of specialized agents that can collaborate to handle intricate tasks. Here's how you can structure these agents:

- Query Decomposer

This agent breaks down complex questions into manageable sub-tasks, ensuring that each query component is addressed effectively. By decomposing queries, the system can tackle multifaceted inquiries more efficiently.

- Retrieval Agent

Once the query is decomposed, the retrieval agent fetches relevant data from the indexed sources. This agent ensures that the information retrieved is pertinent to the specific sub-tasks the query decomposer identifies.

- Synthesis Agent

Finally, the synthesis agent generates the final responses using generative models. This agent synthesizes the retrieved data into coherent, contextually relevant answers, providing users with comprehensive responses to their inquiries.
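The three roles above can be sketched as plain functions to show how data flows between them. This is a framework-free illustration, not CrewAI code: the knowledge base contents are invented sample data, the decomposer simply splits on "and", and the synthesis step joins passages where a real system would prompt a generative model.

```python
import re
from dataclasses import dataclass

# Invented sample data standing in for an indexed knowledge base.
KNOWLEDGE_BASE = {
    "leave": "Employees receive 20 days of annual leave.",
    "insurance": "Health insurance enrollment opens every January.",
}


@dataclass
class SubTaskResult:
    sub_query: str
    evidence: str


def decompose(query: str) -> list[str]:
    """Query Decomposer: split a compound question into sub-questions."""
    parts = re.split(r"\band\b|;", query)
    return [p.strip(" ?.") for p in parts if p.strip(" ?.")]


def retrieve(sub_query: str) -> SubTaskResult:
    """Retrieval Agent: return the first passage whose key appears in the sub-query."""
    for key, passage in KNOWLEDGE_BASE.items():
        if key in sub_query.lower():
            return SubTaskResult(sub_query, passage)
    return SubTaskResult(sub_query, "No matching passage found.")


def synthesize(results: list[SubTaskResult]) -> str:
    """Synthesis Agent: joins evidence; a real agent would call an LLM here."""
    return " ".join(r.evidence for r in results)


def answer(query: str) -> str:
    return synthesize([retrieve(q) for q in decompose(query)])
```

Keeping each role behind its own function makes it straightforward to replace one stage, for example swapping the keyword lookup for a vector search, without touching the others.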

Step 5: Enable Advanced Features in Your Agentic RAG System

Enabling advanced features is essential to maximize the effectiveness of your Agentic RAG systems. These features enhance the system's ability to reason, integrate with external tools, and ensure the quality of generated outputs. This section will explore key components for enabling these advanced capabilities.

1. Adaptive Reasoning

Query Planning and Reranking

Utilizing frameworks like LlamaIndex can significantly enhance the reasoning capabilities of your RAG system. LlamaIndex allows for sophisticated query planning, enabling agents to analyze user queries and determine the best approach for retrieving relevant information. By implementing reranking techniques, the system can prioritize the most pertinent results based on context and user intent, ensuring that users receive the most accurate and relevant responses.

Integrate Feedback Loops

Incorporating feedback loops is crucial for refining the responses generated by your RAG system. By allowing users to rate the quality of responses, you can gather valuable insights into the system's effectiveness. This feedback can be used to adjust the algorithms and improve the accuracy of future responses. Continuous learning from user interactions helps the system evolve and adapt to changing user needs and preferences.
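A feedback loop can start very simply: record each rating and flag the areas where average ratings are low. The sketch below assumes a 1 to 5 rating scale and groups ratings by a topic label; the class name, the scale, and the thresholds are all hypothetical choices for illustration.

```python
from collections import defaultdict
from statistics import mean


class FeedbackLog:
    """Collects 1-5 user ratings per topic and flags weak areas."""

    def __init__(self) -> None:
        self._ratings: dict[str, list[int]] = defaultdict(list)

    def record(self, topic: str, rating: int) -> None:
        if not 1 <= rating <= 5:
            raise ValueError("rating must be between 1 and 5")
        self._ratings[topic].append(rating)

    def needs_review(self, threshold: float = 3.0, min_votes: int = 2) -> list[str]:
        """Topics with enough votes whose average rating falls below the threshold."""
        return sorted(
            topic
            for topic, ratings in self._ratings.items()
            if len(ratings) >= min_votes and mean(ratings) < threshold
        )
```

The `min_votes` guard keeps a single unhappy rating from triggering a review; the flagged topics are where retrieval sources or prompts are worth revisiting first.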

2. Tool Integration - Connecting to External Tools

Integrating your RAG system with external tools can significantly enhance its functionality and responsiveness. Here are some key integrations to consider:

- APIs

Connecting to external APIs, such as CRM systems like Salesforce, allows your agents to access real-time customer data, enhancing the relevance of responses. Additionally, integrating payment gateways can streamline transactions and improve user experience.

- Databases

Whether using SQL or NoSQL databases, integrating these systems enables your RAG agents to retrieve structured data efficiently. This access to diverse data sources enriches the context for generating responses, leading to more informed and accurate outputs.

3. Post-Processing

- Validate Outputs with Rule-Based Checks

After generating responses, it's essential to implement post-processing steps to ensure the quality and reliability of the outputs. One effective method is to use rule-based checks that validate the generated content against predefined criteria. This can help identify and correct inaccuracies or inconsistencies in the responses, ensuring that users receive trustworthy information.

- Add Source Citations for Transparency

Transparency is vital in building trust with users. By including source citations in the responses generated by your RAG system, you allow users to verify the information. This practice enhances credibility and empowers users to explore the sources for further context and understanding.
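Both post-processing ideas can be prototyped in a few lines. In the sketch below, the rules (non-empty answer, a length cap, and every number in the answer appearing in the retrieved context) are example criteria chosen for illustration, and the `Passage` type and file name are hypothetical; your own rules would depend on your domain.

```python
import re
from dataclasses import dataclass

NUMBER = re.compile(r"\d+(?:\.\d+)?")


@dataclass
class Passage:
    source: str
    text: str


def validate(answer: str, passages: list[Passage], max_chars: int = 500) -> list[str]:
    """Return a list of rule violations; an empty list means the answer passed."""
    problems: list[str] = []
    if not answer.strip():
        problems.append("empty answer")
    if len(answer) > max_chars:
        problems.append("answer too long")
    context_numbers = set(NUMBER.findall(" ".join(p.text for p in passages)))
    for number in NUMBER.findall(answer):
        if number not in context_numbers:
            problems.append(f"number {number} not found in retrieved context")
    return problems


def add_citations(answer: str, passages: list[Passage]) -> str:
    """Append a numbered source list so users can verify the answer."""
    sources = "; ".join(f"[{i}] {p.source}" for i, p in enumerate(passages, start=1))
    return f"{answer}\n\nSources: {sources}" if sources else answer
```

A failed check does not have to block the response; it could equally trigger a regeneration or route the query to a human reviewer.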

These advanced features in your Agentic RAG system enhance its capabilities, making it more adaptive, integrated, and reliable.

Step 6: Test and Iterate Your Agentic RAG System

Testing and iterating are crucial for ensuring the system's effectiveness and reliability. This phase involves validating the system with real users, addressing edge cases, and optimizing performance. Let's explore the key components of this step.

Validation

To assess the performance of your RAG system, it’s essential to conduct thorough testing with real users. This process involves measuring the accuracy of the generated responses using established metrics such as BLEU and ROUGE scores.

- BLEU Score

This metric evaluates the quality of generated text by comparing it to one or more reference texts, focusing on precision. A higher BLEU score indicates that the generated text closely matches the reference, reflecting better performance in tasks like translation or summarization.

- ROUGE Score

Similar to BLEU, ROUGE measures the overlap between the generated text and reference text, focusing on recall. It is particularly useful for evaluating tasks such as summarization, where capturing the essence of the original content is critical.

By employing these metrics, you can quantitatively assess your system's performance and identify areas for improvement.
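To show what these metrics measure, the sketch below computes two simplified unigram scores: ROUGE-1 recall (how much of the reference the answer covers) and clipped unigram precision, the building block of BLEU. It is not full BLEU, which also combines higher-order n-grams and a brevity penalty, and the whitespace tokenization is an assumption; for real evaluations an established metrics library is the safer choice.

```python
from collections import Counter


def _tokens(text: str) -> list[str]:
    return text.lower().split()


def unigram_overlap(candidate: str, reference: str) -> int:
    """Count shared words, clipping each word to its count in the other text."""
    cand, ref = Counter(_tokens(candidate)), Counter(_tokens(reference))
    return sum((cand & ref).values())


def rouge1_recall(candidate: str, reference: str) -> float:
    """Share of reference words that also appear in the candidate."""
    ref_len = len(_tokens(reference))
    return unigram_overlap(candidate, reference) / ref_len if ref_len else 0.0


def unigram_precision(candidate: str, reference: str) -> float:
    """Share of candidate words that also appear in the reference."""
    cand_len = len(_tokens(candidate))
    return unigram_overlap(candidate, reference) / cand_len if cand_len else 0.0
```

For the reference "employees receive 20 days of annual leave" and the candidate "employees get 20 days of leave", five words overlap, giving a recall of 5/7 and a precision of 5/6.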

Edge Cases

Addressing edge cases is a vital part of refining your RAG system. Edge cases often involve ambiguous or complex queries that may not be handled effectively by the current retrieval strategies.

To tackle these challenges, consider the following approaches:

- Refine Retrieval Strategies: Analyze the types of ambiguous queries users submit and adjust your retrieval strategies accordingly. This may involve enhancing the semantic understanding of the queries or improving the context provided to the agents. By iterating on these strategies, you can ensure your system is better equipped to handle a broader range of user inquiries.

- User Feedback: Incorporate user feedback to identify common edge cases and refine your system based on real-world usage. This iterative process helps you adapt the system to meet user needs effectively.

Optimization

Optimization is key to enhancing the performance of your RAG system. One effective strategy is to implement semantic caching.

Semantic Caching

Using tools like Redis, you can store the results of frequently asked queries. This caching mechanism reduces latency for recurring queries, allowing your system to respond more quickly to users. When a user submits a query that matches, or is semantically close to, one that has been previously processed, the system can retrieve the cached response instead of reprocessing the entire query, significantly improving response times.

This optimization enhances user experience and reduces the computational load on your system, making it more efficient overall.
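The caching logic can be sketched without any external service. In the example below, a Python list stands in for Redis and a word-overlap (Jaccard) score stands in for embedding similarity; both substitutions, along with the class name and the threshold value, are assumptions made to keep the example self-contained.

```python
import re
from typing import Callable, Optional


def similarity(a: str, b: str) -> float:
    """Jaccard overlap of word sets; a stand-in for embedding cosine similarity."""
    ta = set(re.findall(r"[a-z0-9]+", a.lower()))
    tb = set(re.findall(r"[a-z0-9]+", b.lower()))
    return len(ta & tb) / len(ta | tb) if ta | tb else 0.0


class SemanticCache:
    def __init__(self, threshold: float = 0.8) -> None:
        self.threshold = threshold
        self._entries: list[tuple[str, str]] = []  # (query, response)

    def get(self, query: str) -> Optional[str]:
        """Return the response of the most similar cached query, if close enough."""
        best = max(self._entries, key=lambda e: similarity(query, e[0]), default=None)
        if best is not None and similarity(query, best[0]) >= self.threshold:
            return best[1]
        return None

    def put(self, query: str, response: str) -> None:
        self._entries.append((query, response))


def answer_with_cache(query: str, cache: SemanticCache, generate: Callable[[str], str]) -> str:
    """Serve from the cache when possible; otherwise generate and store."""
    cached = cache.get(query)
    if cached is not None:
        return cached
    response = generate(query)
    cache.put(query, response)
    return response
```

The threshold is the main tuning knob: set it too low and users receive answers to questions they did not ask, too high and the cache rarely hits.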

Validation through established metrics, addressing edge cases, and optimizing for speed and efficiency are all critical components of this process.

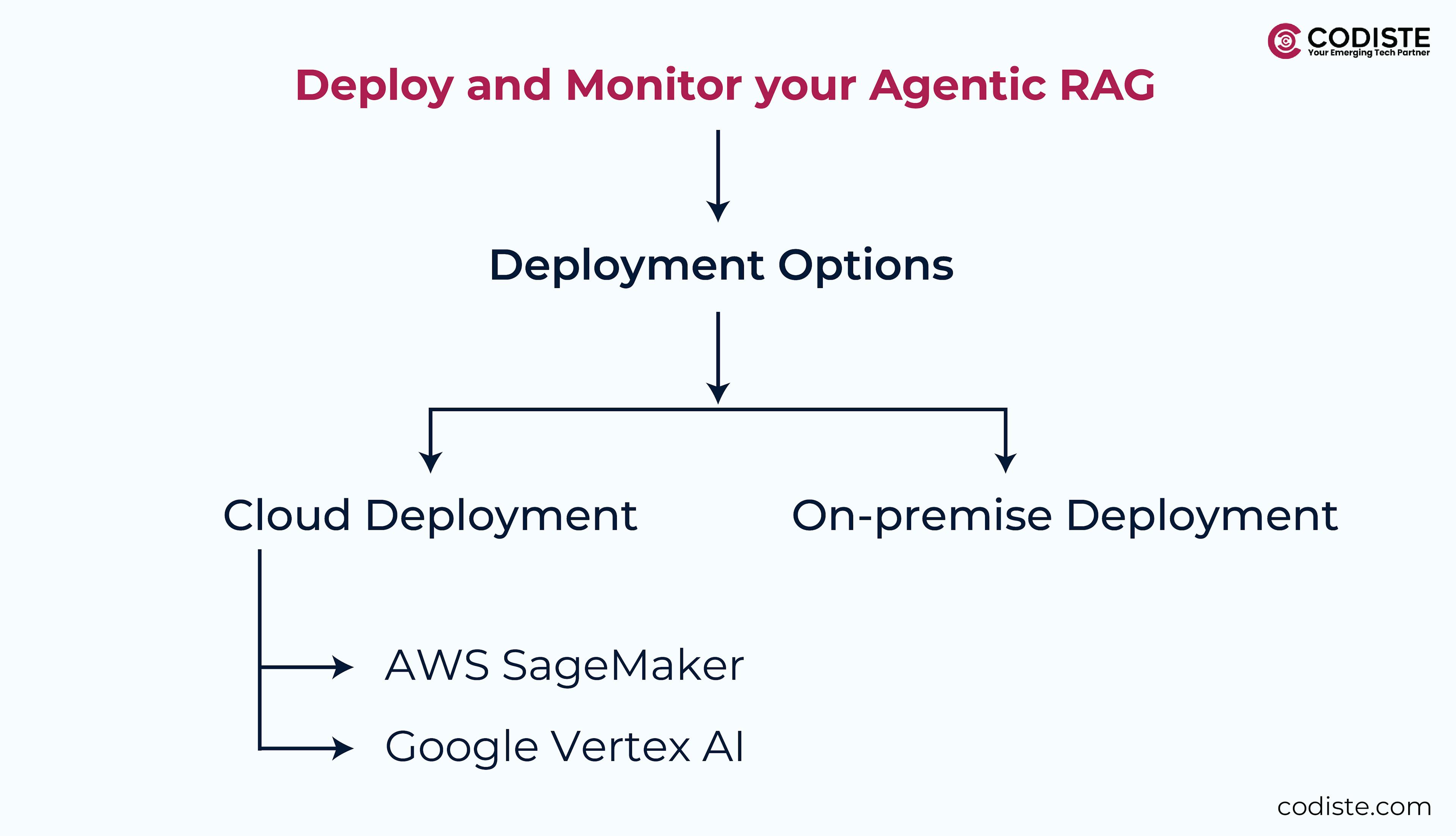

Step 7: Deploy and Monitor Your Agentic RAG System

After building and testing your agentic RAG system, the next critical step is deployment and monitoring. This phase ensures that your system operates effectively in a live environment and continues to meet user needs. Let's explore the key components involved in this step.

Cloud Deployment

Deploying your RAG system in the cloud offers scalability and flexibility. Two popular cloud platforms for deployment are:

- AWS SageMaker: This service provides a comprehensive suite for building, training, and deploying machine learning models. With SageMaker, you can easily manage the entire machine learning lifecycle, from data preparation to model deployment, making it an excellent choice for RAG systems.

- Google Vertex AI: Like SageMaker, Vertex AI offers tools for developing and deploying machine learning models. It integrates seamlessly with Google Cloud services, allowing for efficient management of your RAG system and enabling you to leverage powerful AI capabilities.

On-Premise Deployment

On-premise deployment is a viable option for organizations that prefer to maintain control over their infrastructure. Using Docker containers via RAGapp allows for a no-code setup, making it easier to deploy your RAG system without extensive programming knowledge. This approach provides the benefits of containerization, such as portability and scalability, while keeping your data secure within your infrastructure.

Monitoring

Once your RAG system is deployed, continuous monitoring is essential to ensure optimal performance and reliability. Here are some practical tools for tracking various performance metrics:

- Prometheus/Grafana

These tools work together to provide comprehensive monitoring solutions. Prometheus collects metrics from your RAG system, while Grafana visualizes this data through customizable dashboards. You can track key performance indicators such as latency and error rates to identify and address issues proactively.

- LangSmith

This tool is designed to trace and debug applications built on large language models (LLMs). Integrating LangSmith into your monitoring strategy lets you gain insights into how your RAG system processes queries and generates responses. This visibility is crucial for diagnosing problems and optimizing performance over time.
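Before wiring up a full monitoring stack, it can help to see what "tracking latency and error rates" means in code. The sketch below wraps a query handler and records both in memory; the `Metrics` class and `monitored` wrapper are hypothetical names, and in production you would export these values to a system such as Prometheus instead of keeping them in a Python object.

```python
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Metrics:
    latencies: list = field(default_factory=list)  # seconds per request
    errors: int = 0

    def error_rate(self) -> float:
        return self.errors / len(self.latencies) if self.latencies else 0.0


def monitored(metrics: Metrics, handler: Callable[[str], str]) -> Callable[[str], str]:
    """Wrap a query handler so every call records its latency and any failure."""

    def wrapper(query: str) -> str:
        start = time.perf_counter()
        try:
            return handler(query)
        except Exception:
            metrics.errors += 1
            raise  # re-raise so callers still see the failure
        finally:
            metrics.latencies.append(time.perf_counter() - start)

    return wrapper
```

Recording the latency in the `finally` clause means failed requests are timed too, which matters because slow failures such as timeouts are often the first sign of trouble.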

Future-Proofing Your Agentic RAG System

As technology evolves, it is essential to future-proof your agentic RAG system to maintain its relevance and effectiveness. Two key strategies for achieving this are leveraging edge computing and integrating multimodal capabilities.

Edge Computing involves deploying lightweight models, such as TinyLlama, on edge devices. This approach allows data to be processed closer to its source, significantly reducing latency and improving response times. The advantages of edge computing include:

- Reduced Latency

Local processing minimizes the time required to generate responses, which is particularly beneficial for real-time applications like customer support chatbots.

- Lower Bandwidth Costs

Handling data processing locally reduces the need for extensive data transmission, leading to cost savings.

- Enhanced Privacy and Security

Keeping sensitive data on local devices mitigates the risk of exposure during transmission, which is crucial for industries dealing with confidential information.

Multimodal Integration represents a significant advancement in AI capabilities, allowing systems to process and integrate multiple data types—text, images, and audio. This integration offers several benefits:

- Enhanced User Experience

By combining various data types, your system can provide more engaging and informative responses, such as visual aids alongside text answers.

- Broader Application Scope

Multimodal capabilities open new possibilities for applications, such as e-commerce, where users can receive visual comparisons or video demonstrations.

- Improved Contextual Understanding

Multimodal Large Language Models (MLLMs) can leverage context from different modalities to generate more accurate and relevant responses.

By implementing edge computing and multimodal integration, you can ensure that your Agentic RAG system remains competitive and capable of meeting evolving user needs. These strategies enhance performance and user experience and position your organization to leverage the latest advancements in AI technology. Embracing these innovations will help you stay ahead in a rapidly changing landscape, ultimately driving better user outcomes and satisfaction.

Conclusion

Implementing RAG AI models is a strategic approach that maximizes the benefits of advanced generative AI while aligning with your organization's goals. By enhancing information retrieval and combining dynamic retrieval with effective agent orchestration, you can significantly improve your enterprise AI automation with RAG and your organization's ability to handle complex queries. Let's take a look at the key takeaways from a successful Agentic RAG implementation in business.

Key Takeaways

- Enhanced Efficiency and Effectiveness

Properly gathered, cleaned, and indexed data ensure that your system delivers accurate and contextually relevant responses, improving user satisfaction and operational efficiency.

- Improved User Experience

The robust Agentic RAG pipeline enhances the user experience by providing responsive and adaptive support, aligning with evolving user needs and expectations.

- Better Decision-Making

By leveraging the full potential of generative AI, your organization can drive better decision-making processes through more accurate and insightful outputs.

- Iterative Improvement

Rigorously testing and iterating your Agentic RAG system ensures it continuously meets user needs while improving performance over time.

- Adaptability and Evolution

Effective deployment and monitoring of your Agentic RAG system, whether on the cloud or on-premises, enable your organization to adapt quickly to technological advancements and maintain high performance.

Embracing an iterative approach to Agentic RAG implementation allows your organization to fully harness the power of generative AI, leading to enhanced user satisfaction and better outcomes.

By proactively deploying and monitoring your system, you position your organization to thrive in a rapidly changing technological landscape. This strategic integration enhances operational efficiency and sets the stage for long-term success and adaptability.

If you found this implementation guide useful, we can support you with our agentic RAG development and deployment solutions. If your goal is to automate your business processes, connect with us today at Codiste.
